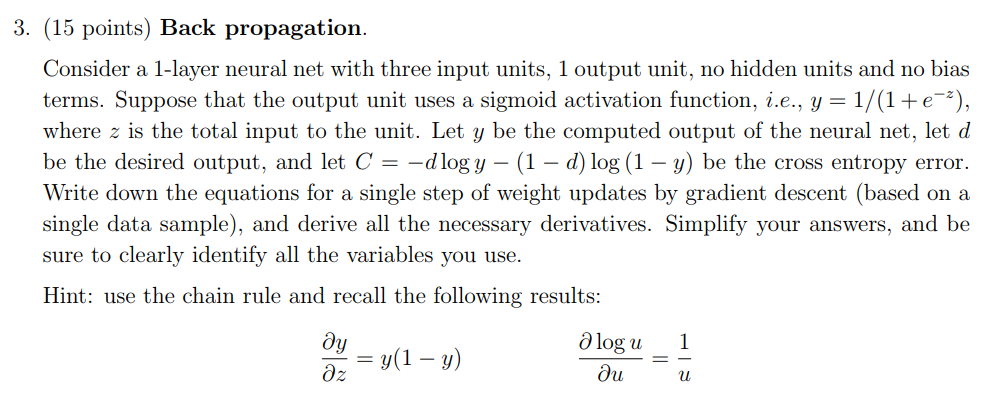

3. (15 points) Back propagation. Consider a 1-layer neural net with three input units, one output unit, no hidden units, and no bias terms. Suppose that the output unit uses a sigmoid activation function, i.e., $y = 1/(1 + e^{-z})$, where $z$ is the total input to the unit. Let $y$ be the computed output of the neural net, let $d$ be the desired output, and let $C = -d \log y - (1-d) \log(1-y)$ be the cross-entropy error. Write down the equations for a single step of weight updates by gradient descent (based on a single data sample), and derive all the necessary derivatives. Simplify your answers, and be sure to clearly identify all the variables you use. Hint: use the chain rule and recall the following results: $\frac{\partial \log u}{\partial u} = \frac{1}{u}$ and $\frac{\partial y}{\partial z} = y(1-y)$.
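One way to sanity-check a derivation for this problem is to compare it against finite differences. The question does not name the inputs, weights, or learning rate, so the sketch below assumes inputs $x_1, x_2, x_3$, weights $w_1, w_2, w_3$ with $z = \sum_i w_i x_i$, and a learning rate `lr` (all hypothetical names). Under those assumptions the chain rule gives $\partial C/\partial y = (y-d)/(y(1-y))$, $\partial y/\partial z = y(1-y)$ and $\partial z/\partial w_i = x_i$, so $\partial C/\partial w_i = (y-d)\,x_i$ and the update would be $w_i \leftarrow w_i - \eta\,(y-d)\,x_i$. The code is a minimal illustration of that result, not the posted answer.

```python
import math


def sigmoid(z):
    """Sigmoid activation y = 1 / (1 + e^{-z})."""
    return 1.0 / (1.0 + math.exp(-z))


def cross_entropy(y, d):
    """Cross-entropy error C = -d log y - (1 - d) log(1 - y)."""
    return -d * math.log(y) - (1.0 - d) * math.log(1.0 - y)


def forward(w, x):
    """Output of the net: z is the weighted sum of inputs (no bias term)."""
    z = sum(wi * xi for wi, xi in zip(w, x))
    return sigmoid(z)


def gradient(w, x, d):
    """Analytic gradient dC/dw_i = (y - d) * x_i from the chain rule."""
    y = forward(w, x)
    return [(y - d) * xi for xi in x]


def gradient_step(w, x, d, lr=0.1):
    """One gradient-descent update on a single sample.

    lr is an assumed learning rate; the question does not specify one.
    """
    g = gradient(w, x, d)
    return [wi - lr * gi for wi, gi in zip(w, g)]


def numerical_gradient(w, x, d, eps=1e-6):
    """Central finite differences, used only to check the analytic gradient."""
    grads = []
    for i in range(len(w)):
        w_plus = list(w)
        w_minus = list(w)
        w_plus[i] += eps
        w_minus[i] -= eps
        c_plus = cross_entropy(forward(w_plus, x), d)
        c_minus = cross_entropy(forward(w_minus, x), d)
        grads.append((c_plus - c_minus) / (2.0 * eps))
    return grads


if __name__ == "__main__":
    # Arbitrary illustrative values, not taken from the question.
    w = [0.1, -0.2, 0.3]
    x = [1.0, 2.0, -1.0]
    d = 1.0
    print("analytic :", gradient(w, x, d))
    print("numerical:", numerical_gradient(w, x, d))
    print("updated w:", gradient_step(w, x, d))
```

If the two printed gradients agree to several decimal places, the simplification $(y-d)\,x_i$ is consistent with the error function as defined in the question.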

vikrant pratap singh
